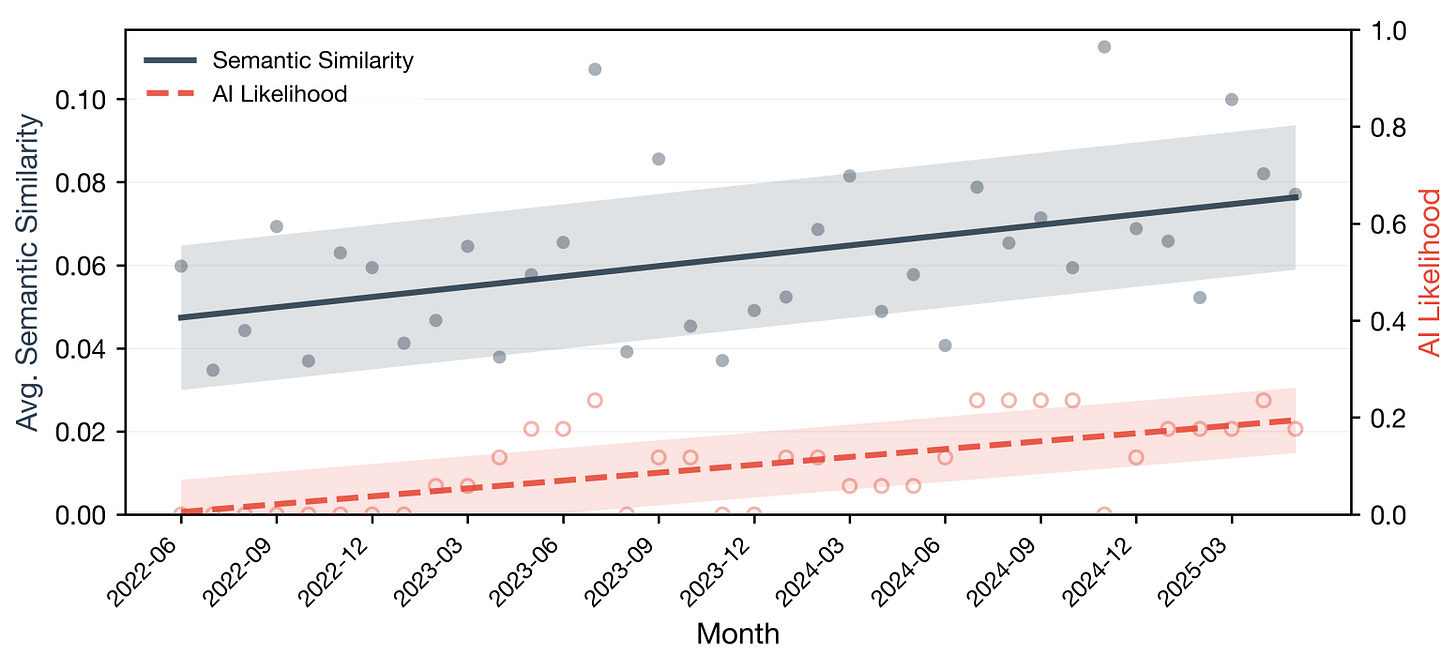

How much of the internet is now AI-generated?

Shell Game's technical advisor Maty Bohacek has an answer.

For some years, I’ve been preoccupied with the notion of “the inversion”: the moment on the internet after which more of the content we encounter will be bot-generated than human-generated. I first wrote about the inversion back in 2022, in a story about the digital fakery spawned by nascent AI image generators. The term originated with a group of YouTube engineers who were trying to detect and combat the rise of bot-driven traffic:

> As far back as 2013, a team of engineers at YouTube hit upon a phenomenon it called “the inversion”: the point at which the fake content we encounter on the internet outstrips the real. The engineers were developing algorithms to distinguish between authentic human views and manufactured web traffic: bought-and-paid-for views from bots or “click farms.” Like the discriminator in a GAN, the team’s algorithms studied the traffic data and tried to understand the difference between normal visitors and bogus ones.
>
> Blake Livingston, an engineer who led the team at the time, told me the algorithm was working from a key assumption: “that the majority of traffic was normal.” But sometime in 2013, the YouTube engineers realized bot traffic was growing so rapidly that it could soon surpass the human views. When it did, the team’s algorithm might flip and start identifying the bot traffic as real and the human traffic as fake.

Even then, pre-ChatGPT, it seemed possible the inversion had already arrived online. (“Dead internet theory,” a larger and more conspiratorial version of the inversion, is a commonly cited belief online.) But there was no way to measure it.

Either way, it seemed to me that a coming inversion of trust was a problem we would all soon be facing, even beyond the internet. We travel through the world largely assuming that the people we encounter, and the images, videos, and texts created by and of those people, are real.¹ But as generative AI both improves and spreads, this assumption could (or at least should) vanish. We could instead begin to default to an expectation that human-like interactions (whether with a caller, a voice from the speaker at a fast food drive-through, an image or video in an ad, or one we scroll past on social media) are more likely to be AI than human. In the realm of telephone customer service, for example, many of us have already come to presume that any voice we hear has a decent chance of being AI-generated, no matter how realistic.

The inversion popped up again in the final episode of Season 1 of Shell Game. When I started using an AI clone to call my friends and family, it upended some of their own trust about who or what was on the other end of the line. My friend Keegan Walden (who would go on to star in Season 2, as Kyle’s startup coach) found that even afterward, talking to the real me, he was left with the feeling that there was a 10 percent chance that I’d just sent a better clone. “I was 90 percent sure, but 10 percent of uncertainty is a lot of uncertainty,” he said. “And so now I just have this fundamental distrust that’s kind of lingering in the background in our relationship every time we talk.”

Whereas I’ve taken an immersive approach to exploring the inversion, Shell Game technical advisor, Stanford AI researcher, and all-around genius Maty Bohacek has taken a systematic one.